Predictive maintenance is at an inflection point. It can deliver enormous value, and the technology is there to monitor entire plants. But too often something seems to go wrong between monitoring at scale and delivering value at scale.

Something needs to change.

One of the best ways to understand where predictive maintenance is heading is to study where other data-heavy industries have already been. This article explores how financial fraud detection and cybersecurity faced and overcame problems similar to those faced today by predictive maintenance.

Simply put, predictive maintenance is dealing with too much information. Traditional predictive maintenance workflows can’t handle the volume of data from whole-plant monitoring. These will need to change to unlock the full value of predictive maintenance at scale.

Adopting behavior-based analytics is that change. Cybersecurity made it. Financial fraud detection made it. And now predictive maintenance is making the change too.

Read on to learn:

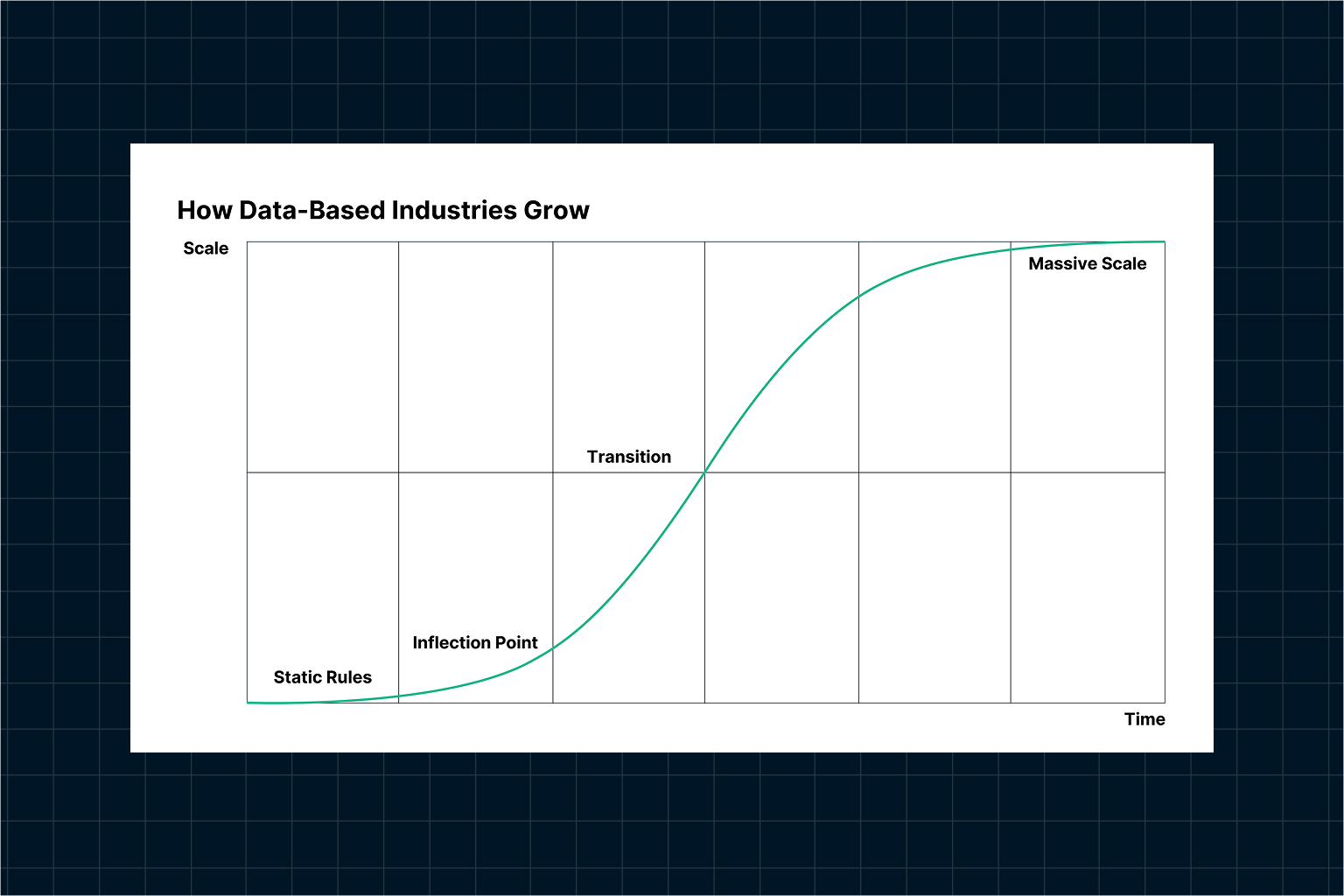

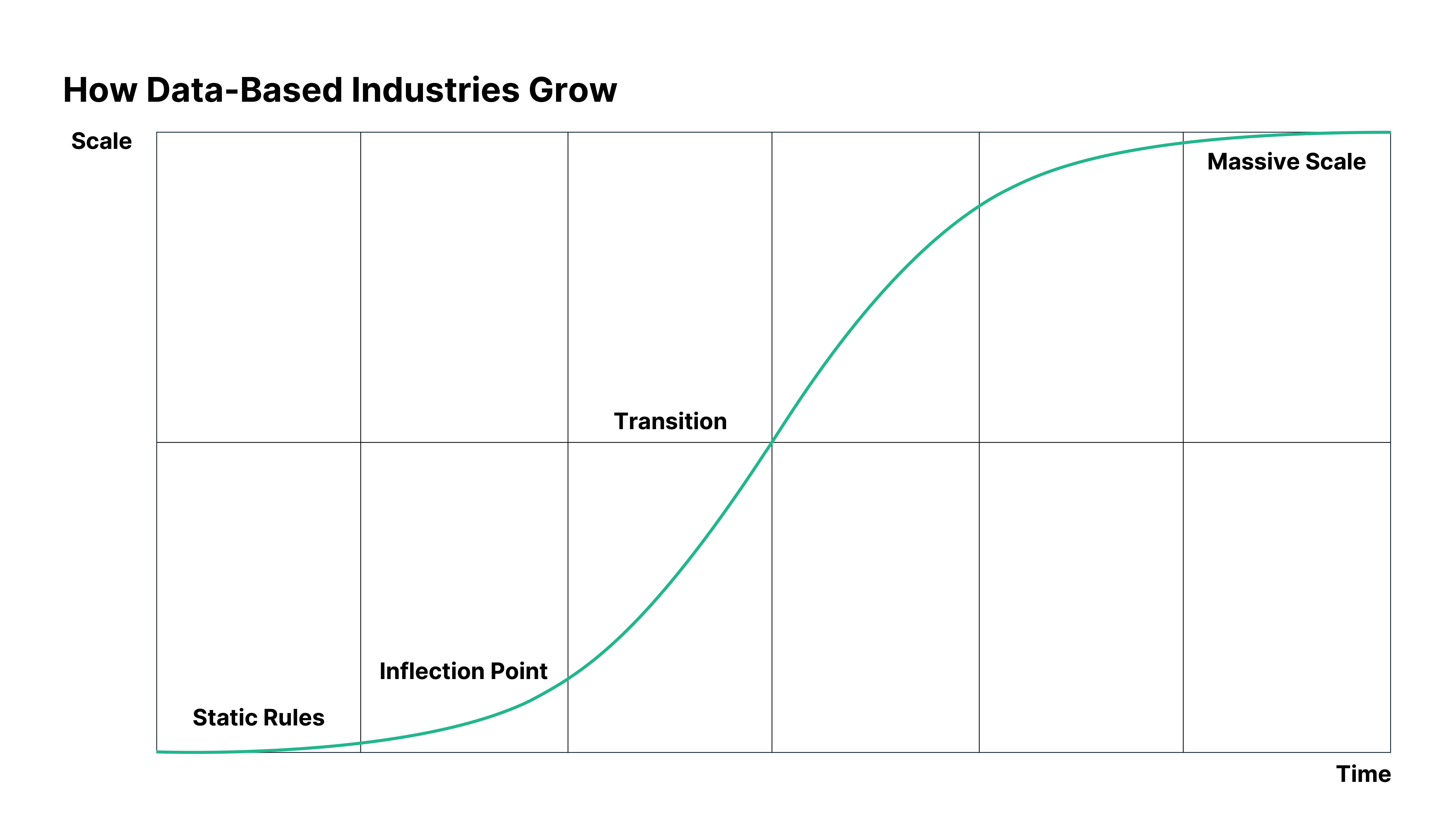

In data-driven fields, change always follows the same pattern.

This might be the end of the story. We can conclude that data-based decision making has limited, small-scale applicability.

But that’s clearly not true. The global data analytics market was valued at $82.23 billion in 2025, and it’s projected to grow five-fold by 2034. There’s nothing small scale or limited about that.

So, how do these fields keep growing?

The short answer is, they adapt. Static limits give way to learning systems with dynamic baselines. These generate fewer alerts. The alerts they do generate are far more accurate. Analysts can refocus on meaningful data. Scalability increases.

At first very slowly, then all at once, the field grows. Learning systems quickly replace static limits and the field can scale freely.

You’ll notice it’s an S curve. This is the most important curve in the world: the shape of all kinds of phase transitions, from the probability of an electron flipping its spin as a function of an applied field, to the collapse of empires.

At first, change is slow. Then it hits an inflection point, a point where rapid change begins. (While we are looking at a curve on a graph, this is not the mathematical inflection point, where the curve's concavity changes.) This begins the period of transition, when new systems replace old. Finally, with the transition complete, the field operates at massive scale.
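One common way to model an S curve, offered here only as an illustration, is the logistic function. The symbols $L$ (the ceiling the field eventually reaches), $k$ (the growth rate), and $t_0$ (the midpoint in time) are assumptions introduced for this sketch.

```latex
f(t) = \frac{L}{1 + e^{-k\,(t - t_0)}}
```

In this model, the mathematical inflection point sits at $t = t_0$, where $f(t_0) = L/2$ and growth is at its fastest. The inflection point in the sense used here comes earlier, at the knee of the curve where growth first becomes hard to ignore.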

In Six Rules for Effective Forecasting, Paul Saffo advises that we “Look for the S Curve”. Once we start looking, we find them everywhere, even within other S curves. The agonizing early days of slow growth are, in fact, small S curves: hard-won victories that stack infinitesimally, coming closer and closer until they reach the inflection point and change is undeniable. Finding these precursors is key to accurately identifying the inflection point when it comes.

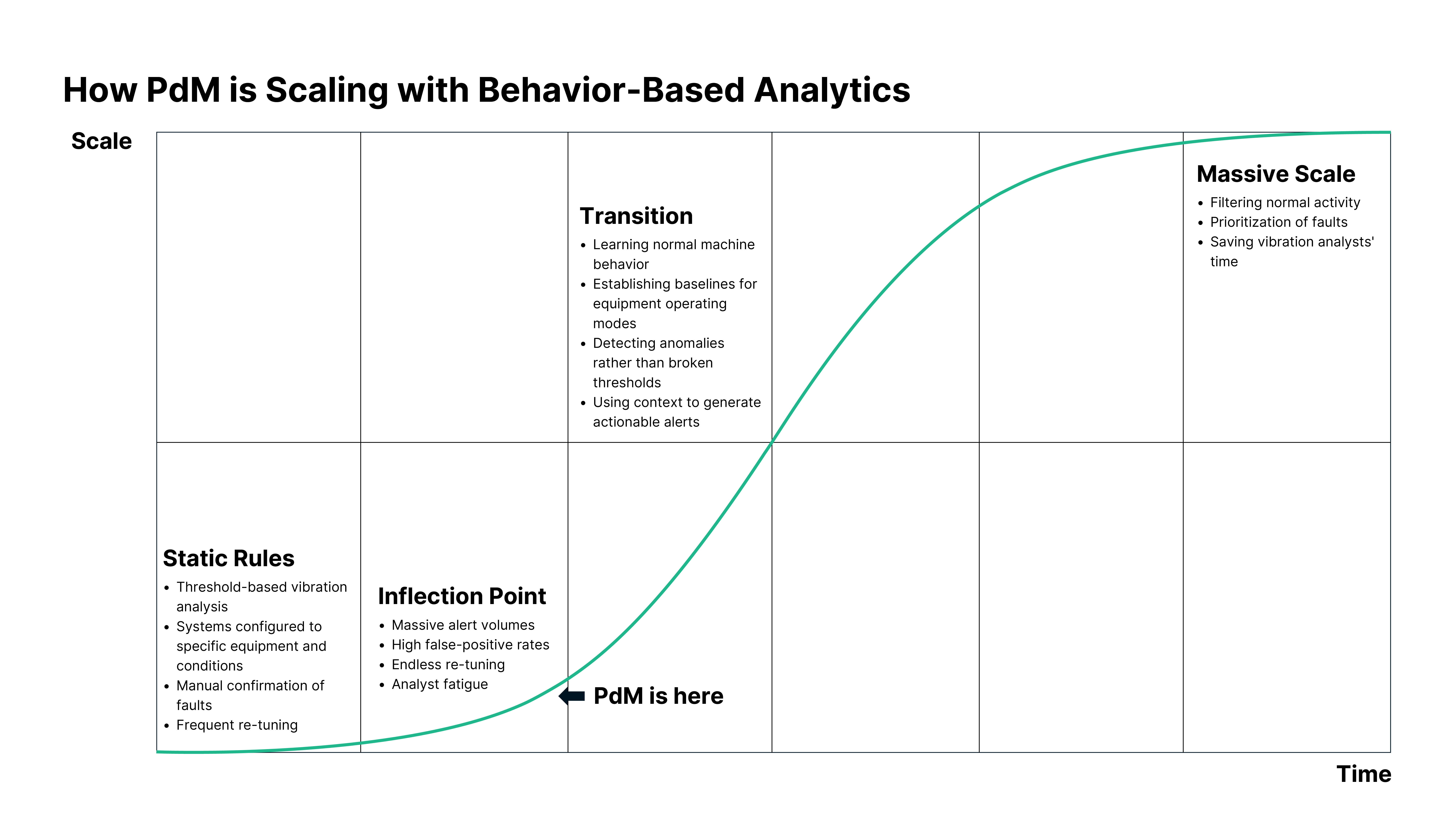

Right now, predictive maintenance is at that inflection point.

After decades of incremental advances based on in-person inspections, oil analysis, and hand-held vibration sensor data, twin enabling technologies in the form of better, cheaper sensors and wireless connectivity have pushed the scale of data beyond the level static rules can handle.

Most condition monitoring systems still rely on heavy configuration that includes selecting machine categories, defining ISO zones, setting baselines, validating operating modes, and constantly tuning thresholds. The static rules this yields work, but only in a narrow set of conditions.

Wireless vibration sensors have opened new possibilities for continuous monitoring of critical assets and balance of plant, but this produces an increase in the scale and complexity of available machine data. This is exactly the scenario that pushes static rules outside of the conditions in which they were designed to operate.

In practice, 1000 sensors can generate 500 alarms in a single month based on static rules. Nearly all are false alarms. Constantly crying wolf increases the cost of maintenance by 30%. Meanwhile, it has the knock-on effect of missed failures and increased downtime.

The rules have, time and time again, failed. Vibration analysts are overloaded with false alarms. Maintenance teams are overloaded with manually verifying them. Trust in condition monitoring systems is collapsing.

This is the trap that kills too many remote monitoring systems as they attempt to scale. Pilot projects, based on a small number of sensors managed by static rules, can go well. But when plants roll out more sensors, covering machines operating at variable speeds and loads, in transient modes, or under varying operating conditions, the static rules break.

Suddenly, multi-million-dollar sensor systems look like problems rather than assets.

There is a way forward. Enabled by advances in machine learning and cloud-based computing, behavior-based analytics are replacing static thresholds, opening up a period of transition to massive scale.

Behavior-based analytics fundamentally reframes the problems it solves. Rather than searching through data for known problems, behavior-based analytics builds an understanding of typical behaviors in all their variety and differing contexts.

The key insight for predictive maintenance is that there is no universal reference for machine health. The strongest indicator of a problem is deteriorating condition, rather than operation outside a designated norm or even the mere existence of a fault. Machines can operate for years with minor faults. If they need repairing, they will either get worse or cause other faults. Both are a change of condition that behavior-based analytics notices.
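The difference between a static limit and a learned baseline can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular product: the 4.5 mm/s limit, the z-score test, and the sample readings are all hypothetical.

```python
from statistics import mean, stdev

STATIC_LIMIT_MM_S = 4.5  # hypothetical fixed alarm limit (vibration velocity, mm/s RMS)


def static_alarm(reading: float, limit: float = STATIC_LIMIT_MM_S) -> bool:
    """Alarm whenever a reading exceeds one fixed limit shared by every machine."""
    return reading > limit


def behavior_alarm(history: list[float], reading: float, z_limit: float = 3.0) -> bool:
    """Alarm when a reading departs from this machine's own recent behavior."""
    if len(history) < 2:
        return False  # not enough history to define a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return reading != baseline
    # z-score: how many standard deviations the reading sits from the baseline
    return abs(reading - baseline) / spread > z_limit


# Machine A (made-up data) has always run rough but stable.
machine_a_history = [5.0, 5.1, 4.9, 5.0, 5.2, 4.8, 5.0, 5.1]
# Machine B (made-up data) normally runs smooth; its latest reading has doubled.
machine_b_history = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 1.0, 1.0]

a_static = static_alarm(5.1)                           # above the fixed limit
a_behavior = behavior_alarm(machine_a_history, 5.1)    # normal for this machine
b_static = static_alarm(2.0)                           # below the fixed limit
b_behavior = behavior_alarm(machine_b_history, 2.0)    # a clear change of condition
```

In this toy data, the static rule alarms on the stable machine and stays silent on the deteriorating one, while the per-machine baseline does the opposite. A production system would presumably also account for operating context such as speed and load, which this sketch ignores.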

This solves a key problem with searching for information at scale. Finding a needle in a haystack, as it were, is harder the larger the haystack. But a single needle may not be a problem in a growing haystack. By understanding both the needle and the haystack, behavior-based systems can effectively tell when the former is becoming a problem.

The behavior-based advantage goes further. Beyond a simple affirmation of problems, behavior-based analytics can back its discoveries with context-rich information learned through understanding behavior. That means better accuracy, fewer false alarms, diagnostic information, and actionable insights.

Calculate the ROI on behavior-based analytics in your plant here.

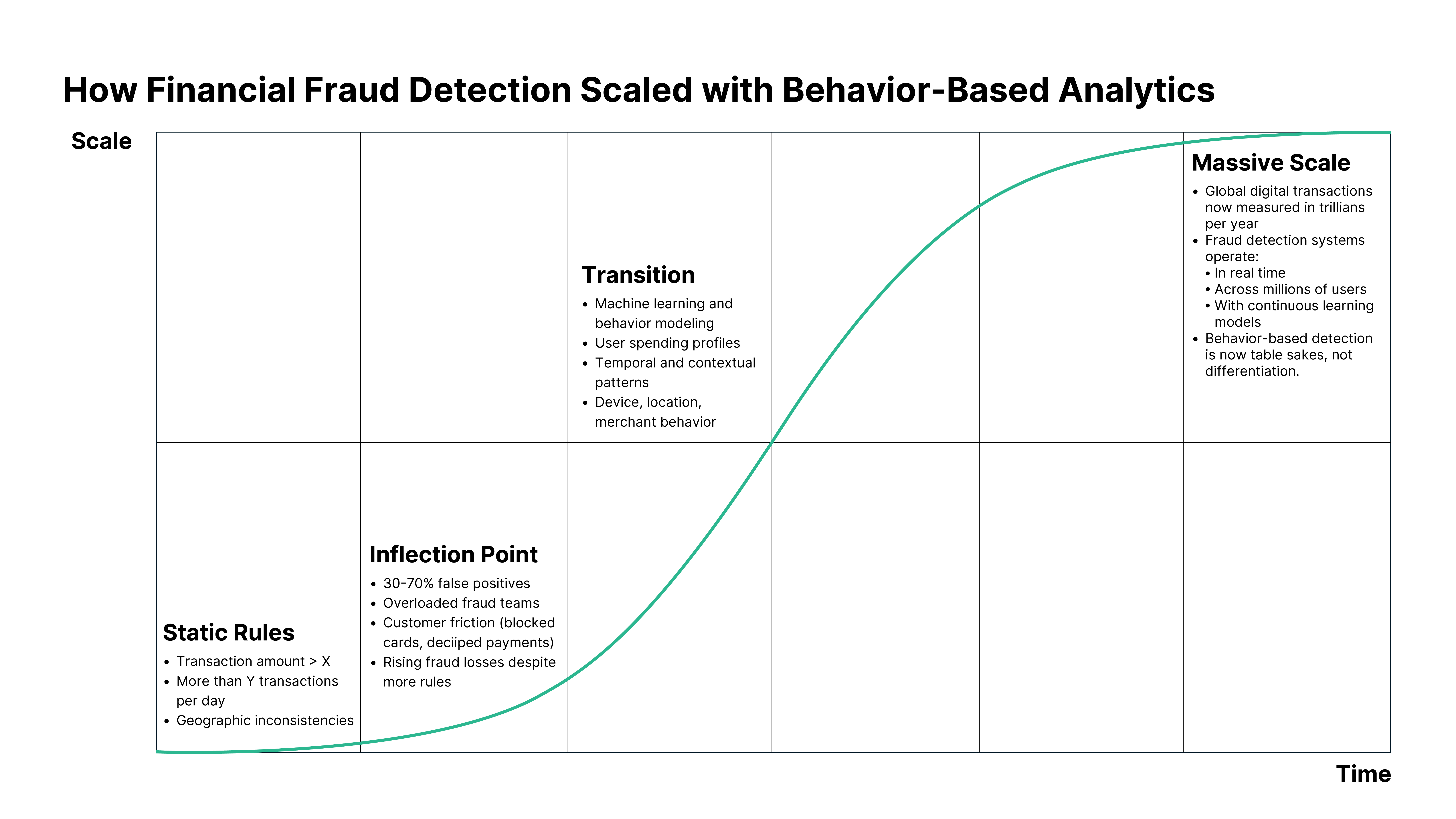

Up until the early 2000s, fraud detection systems were built on static rules and thresholds. Did a transaction exceed a set amount? Has an account made too many withdrawals in a set time? Has someone made a purchase in a new country? If so, accounts were flagged while transactions were verified.

This worked because transaction volumes and fraud sophistication were relatively low. Detection accuracy was limited, returning a high proportion of false positives, but because volumes were low, the cost of false alarms was tolerable.

The market for fraud analytics was accordingly small. Banks looked at fraud detection as a cost center. It wasn’t expected to produce value, just minimize losses. Growth, therefore, was incremental and tightly coupled to banking IT spend.

Then, seemingly overnight, everything changed. E-commerce took off. The volume of digital payments and real-time transactions exploded. Fraud patterns changed too, becoming more adaptive and faster paced.

The rules that had been good enough before were no longer nearly good enough. They returned high rates of false positives. Analysts were forced to waste time validating noise, while customer frustration mounted over blocked cards. Trust in banks’ fraud detection systems fell. If banks were so busy blocking cards over online shopping sprees, how were they supposed to stop real fraud?

This was the inflection point.

Banking is built on trust. Both missing fraud and taking action based on false positives undermine that trust. Furthermore, analysts need to be able to investigate efficiently, both for the sake of clients and the bottom line. With the critical realization that false positives were becoming as costly as actual fraud, accuracy, trust, and analyst efficiency became business-critical metrics. Fraud-detection systems had to change to meet those metrics.

These systems were applying static logic to an increasingly dynamic world. The same technological changes that were enabling a massive increase in consumer spending were also producing qualitative changes. It was easier than ever to transfer money, do business abroad, and make big purchases—all activities that triggered static thresholds. Meanwhile, this was creating new opportunities for rapidly iterative and massively scaled fraud.

For example, with a single account takeover, a criminal could only move so much money before triggering a static rule that locked the account. But having learned that rule, the criminal could take over thousands of accounts and make thousands of fraudulent transactions that stayed within the rules.

To meet these challenges, fraud-detection systems became more dynamic. Instead of asking whether transactions had crossed predefined limits, they began asking whether the behavior was different from the normal behavior for specific users.
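As a rough sketch of that shift, the Python below compares a fixed transaction limit with a check against each user's own spending history. The limit, the ratio-to-median test, and the transaction amounts are hypothetical, chosen only to make the contrast visible.

```python
from statistics import median

STATIC_LIMIT = 1000.00  # hypothetical fixed per-transaction limit


def static_rule(amount: float) -> bool:
    """Flag any transaction above a fixed amount, regardless of who made it."""
    return amount > STATIC_LIMIT


def behavior_rule(past_amounts: list[float], amount: float, ratio_limit: float = 5.0) -> bool:
    """Flag a transaction far larger than this user's typical spend."""
    if not past_amounts:
        return False  # no history yet, so nothing to compare against
    typical = median(past_amounts)
    return typical > 0 and amount / typical > ratio_limit


# A frequent traveler (made-up data) who routinely makes large purchases.
traveler_history = [1200.0, 950.0, 1500.0, 1100.0, 1300.0]
# A taken-over account (made-up data) whose owner normally spends small amounts.
victim_history = [12.5, 30.0, 8.75, 22.0, 15.0]

# Each tuple is (static rule result, behavior rule result).
traveler_flags = (static_rule(1400.0), behavior_rule(traveler_history, 1400.0))
victim_flags = (static_rule(950.0), behavior_rule(victim_history, 950.0))
```

Here the static rule blocks the traveler's ordinary purchase and misses the fraudulent transaction that was sized to stay under the limit. The per-user check reverses both outcomes.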

The move to behavior-based learning changed everything. By learning individual behavior patterns over time, fraud systems reduced noise, increased detection accuracy, and allowed analysts to focus on real cases rather than rule maintenance. Trust restored, scale followed.

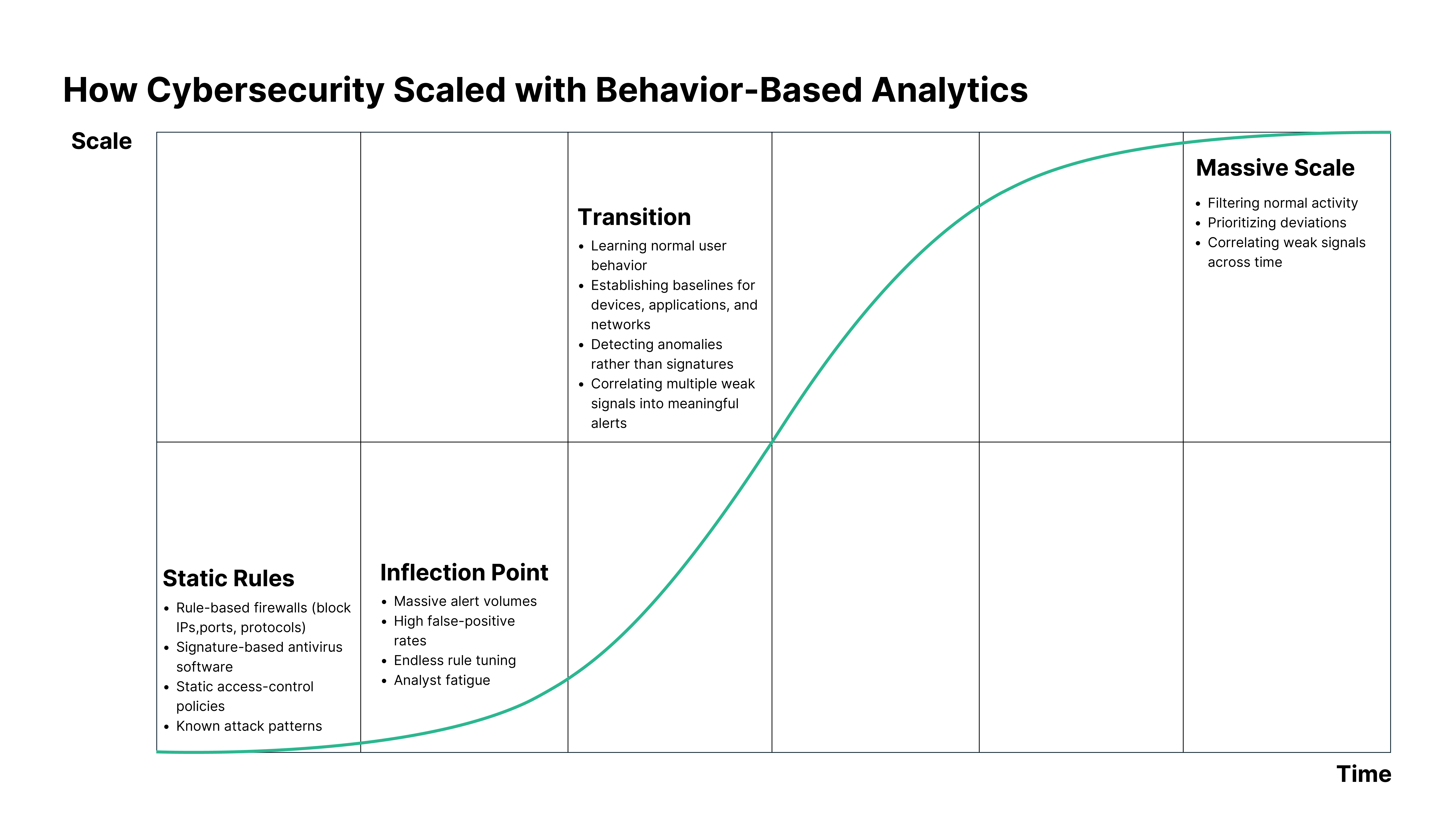

In the early days of enterprise IT, cybersecurity was built around a simple assumption: if we know what bad looks like, we can block it. For decades, that meant fixed rules, signatures, and thresholds. Rule-based firewalls blocked specific IPs, ports, and protocols. Antivirus software blocked known signatures. Static access-control policies blocked bad actors. Capable administrators blocked known attack patterns.

It worked, because networks were smaller, applications were fewer, and attackers reused techniques. But these systems had a fundamental limitation: they only worked for threats that were already known.

In the mid-2000s, as digital infrastructure expanded, three things quickly changed.

First, the attack surface exploded. Cloud services, mobile devices, remote access, web applications, and third-party integrations all created new vectors of attack and new vulnerabilities. Think of it like a fortress city that’s grown beyond its walls. The battlements that protected the older, smaller city can’t do anything for the suburbs beyond. And closing the gates isn’t an option, because that would cut off users as well as attackers.

Second, attackers got more adaptive. Much like static financial fraud rules, static cybersecurity rules are learnable and, therefore, beatable. Polymorphic viruses, zero-day exploits, living-off-the-land attacks, and widespread credential abuse all evolved to beat static rules. The fundamental assumption of cybersecurity had collapsed: defenders no longer knew what bad looked like.

Third, noise overwhelmed security teams. Static defenses were seeing threats everywhere, most of them false. Security teams were treading water, endlessly tuning rules to new threats, which took time away from investigating real ones. Something had to change.

Static rules were being applied to a dynamic, adversarial environment. And they were losing.

This was the inflection point.

By the late 2000s and early 2010s, leading security teams began changing how they thought about detection. Instead of asking whether activity matched a known bad pattern, they started asking whether behavior was abnormal in a given context. Instead of striving for impossible perfect protection, they focused on early detection, quick response, and minimized impact, effected through SOC operations and incident response teams.

This was the birth of behavior-based cybersecurity. Whereas static defenses had counted on knowing what bad looked like, behavior-based cybersecurity learns what typical looks like. Dispensing with the notion that anything can be trusted, these systems continuously monitor the behavior of users, devices, networks, and applications.

Beyond being simply better at dealing with dynamic threats, this approach has several advantages. By filtering normal activity, behavior-based systems dramatically reduce noise. This also allows for prioritization of deviations, and correlation of multiple small anomalies to build meaningful alerts.
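To make the idea of correlating small anomalies concrete, here is a minimal Python sketch. The `Anomaly` record, the 60-minute window, the score scale, and the device names are all hypothetical. The point is only that several minor deviations on one entity, close together in time, can add up to an alert that no single deviation would raise.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Anomaly:
    entity: str    # user, device, or application the deviation belongs to
    minute: int    # time of the observation, in minutes from an arbitrary start
    score: float   # how unusual the observation is (hypothetical 0.0 to 1.0 scale)


def correlate(anomalies: list[Anomaly], window: int = 60, alert_at: float = 1.0) -> list[str]:
    """Return entities whose anomalies within one time window sum to an alert."""
    by_entity: dict[str, list[Anomaly]] = defaultdict(list)
    for anomaly in anomalies:
        by_entity[anomaly.entity].append(anomaly)

    alerts = []
    for entity, items in by_entity.items():
        items.sort(key=lambda a: a.minute)
        start = 0
        total = 0.0
        for item in items:
            total += item.score
            # Drop anomalies that fall more than `window` minutes before this one.
            while item.minute - items[start].minute > window:
                total -= items[start].score
                start += 1
            if total >= alert_at:
                alerts.append(entity)
                break
    return alerts


events = [
    Anomaly("laptop-17", 5, 0.4),    # login at an unusual hour
    Anomaly("laptop-17", 20, 0.4),   # access to a rarely used file share
    Anomaly("laptop-17", 35, 0.4),   # unusually large outbound transfer
    Anomaly("printer-03", 10, 0.4),  # one isolated oddity
    Anomaly("server-02", 0, 0.5),
    Anomaly("server-02", 300, 0.5),  # too far apart in time to be related
]

alerts = correlate(events)
```

Only `laptop-17` produces an alert in this example: its three minor deviations fall inside one window, while the isolated and widely spaced anomalies on the other devices are filtered out as noise.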

Counter-intuitively, by looking at typical behavior first, this approach enabled security teams to focus on what mattered, rather than trying to see everything. Security tools changed accordingly. Instead of trying to do the analyst’s job, they did the watching, prioritizing, contextualizing, and surfacing of incidents that needed an analyst’s attention. The end result was faster decision making.

The move to behavior-based security reshaped the cybersecurity market. Spending rapidly increased as behavior-based platforms became core infrastructure. Analytics moved from optional add-on to strategic necessity. Entirely new categories emerged, such as endpoint detection and response, network traffic analysis, identity-centric security, and the transition from security information and event management to user and entity behavior analytics and extended detection and response.

Success in cybersecurity was no longer measurable in number of rules or the size of signature databases. What mattered was accuracy, analyst experience, and scalability.

In both financial fraud detection and cybersecurity, the same pattern emerged. Static rules worked but broke at scale. With scale and increased complexity, false positives exploded. This overloaded analysts with bad information, taking their time away from valuable work while also shaking their trust in the systems they used. Finally, behavior-based learning emerged as a paradigm-changing shift that opened these fields up to massive growth.

Now, 10 to 15 years later, we can see that predictive maintenance has been following the same path. To reach massive scale, predictive maintenance needs to go where financial fraud detection and cybersecurity have already gone: to behavior-based analytics.

This is a new paradigm in how vibration analysts interact with machine data. Instead of searching machine data to find problems, a learning system that understands normal machine behavior can identify abnormal machine behavior and give vibration analysts the information they need to plan maintenance and extend uptime. As in cybersecurity and financial fraud detection, the expert oversight at the center of the system remains critical, performing high-level analysis of high-value data.

The history of financial fraud detection and cybersecurity provides several clear lessons.

The overarching lesson is clear: for predictive maintenance to scale, it will need to follow the lead set by those fields. By adapting systems to leverage behavior-based analytics, predictive maintenance can transition away from static rules, towards trustworthy, reliable, and effective monitoring of entire plants. That change starts now.

To learn more about how behavior-based analytics can make a difference in your plant, get in touch here.