Not sure if your sensor data is reliable? Add population context to it

One of the fundamentals of condition monitoring is being able to trust the data you get from your sensors. Normally, an IIoT installation goes through a set of checks before it becomes fully functional, such as connectivity and authentication tests, to ensure that each sensor is in the right operational state or, in other words, collecting and sending data as expected.

But a sensor can pass all the operational checks and still send misleading data. In a machine that has already been monitored for some time, this type of problem is easier to identify, as the measurements would show the change and, hopefully, get the attention of the person analyzing them.

It becomes trickier to detect in a new fleet of equipment or a new sensor installation. A fleet consists of multiple assets that have similar machine schematics and work under similar operational and environmental conditions. So, even if not all machines entered operation at the same time, it is typically assumed that the assets and their components were in good condition when they did. In short, all the initial measurements are typically assumed to represent normal operating conditions.

And that’s when you can inadvertently land right in the crap in/crap out problem. As the researcher and consultant Jonas Berge explains, “if the sensor data is not good the analytics will give results that mislead maintenance, reliability, and process engineers. Similarly, if the sensor data is not good, the control strategy may take the wrong action causing a spurious trip, miss a safety demand which could be dangerous, and operators may be misled taking the wrong action.”

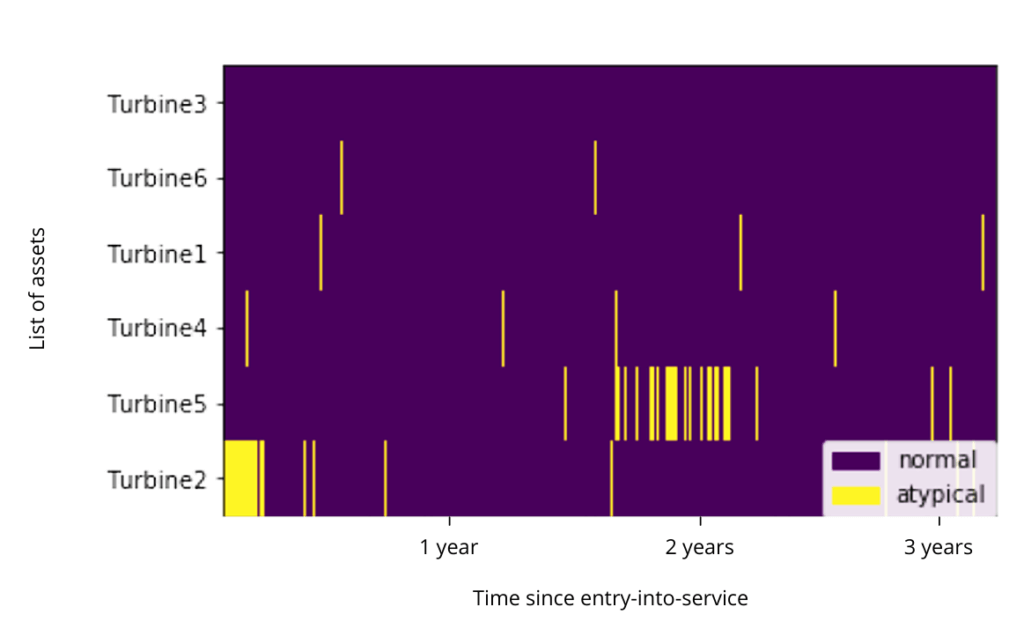

This situation was verified in an analysis performed with MultiViz Vibration on data collected from vibration sensors monitoring the bearings of the shafts that connect gearboxes to power generators in wind turbines. Vibration signals were taken every 12 hours for 46 months, and all assets and components were in good condition.

If someone were to manually check the measurements from each machine individually, they would consider them to represent the asset’s normal operating mode. A healthy asset typically displays a single operational mode during most of its life, due to the relatively stable operation and performance of the machine on which the sensor is installed.

MultiViz Vibration, however, detected a different operational mode in one of the wind turbines, right at the beginning of its service life. After an inspection, it was discovered that the vibration sensor had not been installed properly and was therefore sending data that did not reflect the machine’s actual vibration.

The key to finding this out was adding the context of the entire fleet of assets to the analysis. The analysis was done with the Blacksheep Detection feature, which analyzed data from six wind turbines in order to identify assets that behave differently from the rest of the population. It performed mode identification over the population of assets and, based on all modes identified in the population, labeled as atypical the wind turbines with the largest share of uncommon modes.
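To make the idea of population context more concrete, here is a minimal sketch in Python. It is not how Blacksheep Detection is implemented, since the internals are not described here. It assumes each measurement has already been reduced to a single number (for example, an RMS vibration level), and it swaps in a very simple gap-based grouping as a stand-in for real mode identification. The function names, the `gap` and `common_share` thresholds, and the toy data are all hypothetical.

```python
from collections import Counter


def identify_modes(values, gap):
    """Toy stand-in for mode identification: sort the pooled values and
    start a new mode wherever two neighbours differ by more than `gap`."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    labels = [0] * len(values)
    mode = 0
    for prev, cur in zip(order, order[1:]):
        if values[cur] - values[prev] > gap:
            mode += 1
        labels[cur] = mode
    return labels


def uncommon_mode_share(fleet, gap=1.0, common_share=0.2):
    """For each asset, return the fraction of its measurements that fall
    into modes that are rare across the whole population.

    `fleet` maps an asset name to a list of scalar features.
    `gap` and `common_share` are illustrative thresholds (assumptions).
    """
    assets = list(fleet)
    pooled = [v for a in assets for v in fleet[a]]
    owners = [a for a in assets for _ in fleet[a]]

    # Modes are identified over the pooled population, not per asset.
    labels = identify_modes(pooled, gap)
    counts = Counter(labels)
    uncommon = {m for m, c in counts.items() if c / len(pooled) < common_share}

    share = {}
    for a in assets:
        own = [lab for lab, o in zip(labels, owners) if o == a]
        share[a] = sum(lab in uncommon for lab in own) / len(own)
    return share


# Hypothetical fleet: six turbines with ten measurements each, all close to 1.0.
fleet = {
    f"WT{i}": [1.0 + 0.01 * ((i + k) % 5) for k in range(10)]
    for i in range(1, 7)
}
# One sensor sends very different values right after installation.
fleet["WT4"][:3] = [4.0, 4.1, 3.9]

share = uncommon_mode_share(fleet)
black_sheep = max(share, key=share.get)  # "WT4" for this toy data
```

Looked at on its own, the early data from `WT4` could pass for that machine's normal mode. It only stands out as uncommon once it is compared against the modes seen across the whole fleet.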

So, being able to automatically add population context to asset data helps avoid the risk of assuming that data from a newly installed sensor is normal. It also strengthens condition monitoring as a whole, as the activity relies directly on the accuracy of the collected information.